1. Background

In the previous blog on digital acceleration, we discussed how companies are rapidly increasing their investment in technology to improve operational efficiency and gain competitive advantage. A key focus area for these companies is providing access to a single source of the truth through an energy data platform that spans the entire lifecycle of operations.

In this blog, we will discuss the best practices for establishing an enterprise energy data platform and the key industry and technology trends that we see influencing future direction.

2. Energy Data Platform

The energy data platform is a single source of the most trusted data that is available to drive reporting, analysis, decisions, and workflows across all aspects of the business from exploration to engineering and operations. There are typically multiple domains covered in the energy data platform including sub-surface, facilities, operations, finance, customer, and, an area gaining a great deal of attention, ESG.

This does not mean that all the data is managed within a single, physical repository. When we consider the 4 V’s of data management (volume, velocity, variety, and validation), it is clear that trying to manage everything in a consolidated store is impractical for even the smallest companies. Nor is the energy data platform a simple data warehouse or data lake (read swamp) where selected data is coalesced for specific business purposes.

An energy data platform integrates master data from across the organization based upon business rules, workflows, industry standards, and best practices. It then delivers a consistent interface for accessing the enterprise data (master, transactional, and unstructured) that the business requires. Through this interface, the energy data platform can deliver the data that the business needs through a variety of feeds to lifecycle applications, reporting and analytics tools, and warehousing platforms. For these reasons, we currently consider the OSDU to be a consumer of data from the energy data platform rather than an MDM solution.

In the following sections, we will discuss the key components of the energy data platform, using the EnergyIQ application stack from Quorum Software for illustrative purposes. There are three layers that make up the EnergyIQ energy data platform, as illustrated in Figure 1.

Figure 1: EnergyIQ Energy Data Platform

- The foundation of the platform is the Trusted Data Manager (TDM), which contains decades’ worth of knowledge and best practices for loading, blending, aggregating, and validating multiple sources of data into a single source of the truth.

- IQexchange is the data ingestion and consumption layer that delivers enterprise integration services to synchronize TDM with applications and other data sources and targets.

- IQinsights consumes data from TDM and other sources to deliver business analytics and visualization tools.

These three layers in combination establish an enterprise energy data platform to support the digital transformation initiatives of an organization.

3. Governance

Effective data governance is a critical component of any Digital Transformation strategy. Many companies, however, get paralyzed by data governance, spending years and millions of dollars developing complex governance strategies and supporting organizational structures that remain in impressively bound folders never to see the light of day.

The reality is that most companies are trying to solve the same problems and there is a wealth of governance information available to oil and gas companies through standards organizations such as PPDM and Energistics. This information will provide the necessary foundations without the need to employ a small army of consultants to interview everyone and their grandmother.

In this section, we focus on the critical decisions that affect the overall data architecture and integration interfaces, since these are difficult and expensive to change later.

3.1 Definitions

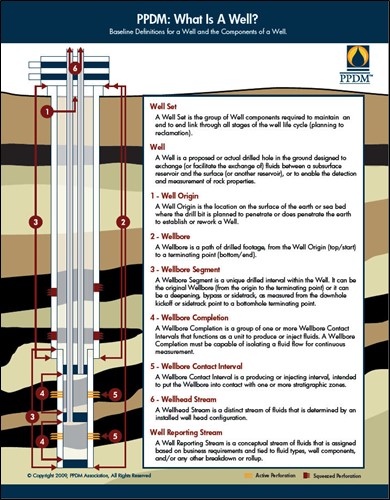

The PPDM ‘What is a Well?’ initiative was designed to provide a baseline set of definitions and relationships for a Well and the Components of a Well to which information is typically attached. The definitions were established by a consortium of large and small oil and gas companies along with service providers; see Figure 2. They were intended to be relevant regardless of where the Well is located geographically and whether it is drilled from a large offshore platform or is a horizontal Well in an unconventional play.

Figure 2: PPDM What is a Well?

These definitions have gained wide acceptance across the industry, as they enable companies to establish a platform for communications and build a framework for data integration across the Well lifecycle. The full impact of these definitions is only realized, however, when they are built into the corporate Well Master database and used to integrate data across lifecycle platforms. Within the EnergyIQ Trusted Data Manager (TDM), we created the concept of the Well Hierarchy as the practical implementation of ‘What is a Well?’ and have spent many years designing and building its key components:

- Well Identification

- Well Matching

- Aggregation

- Blending

It is beyond the scope of this blog to discuss each of these individually. Suffice it to say that they are critical to the successful integration of data across the well lifecycle, and it is difficult to see how an MDM platform can be successful without support for this structure.

While the ‘What is a Well?’ definitions form the foundation of the MDM solution, there are many others that can cause problems if not addressed early. Here we are thinking of things such as formation, producing zone, and producing interval. These are particularly important when it comes to measuring volumes and treatments. It is not just about definitions related to the well, however; PPDM has undertaken an ambitious initiative called ‘What is a Facility?’. This has become very important with the growth in remote measurement and monitoring, as it ties sensors, meters, and other facilities back to the well and field.

The importance of effective governance cannot be overstated, but it is equally important to take advantage of the copious amount of work that has already been delivered in this space.

3.2 Standards

A key aspect of governance is the agreement on a set of standards to use for data representation. This ensures that queries against the data set return the expected results and that reporting and analytics are consistent.

Identification: One of the most important, and least consistently applied, standards is the assignment of identifiers to key entities such as wells and facilities. For the well, it is important to assign the identifier as early in the lifecycle as possible (at a minimum, as soon as the well is shared across business units), and identifiers should be assigned to each level of the Well Hierarchy, not just the Well Origin.

The PPDM Global Well Identification guidelines were established by a consortium of operators and provide an excellent framework for identification. We adopted this approach for the EnergyIQ energy data platform over a decade ago and have had no reason to reconsider this decision.

UOM: When companies are integrating data across the enterprise, it is often the case that different asset teams work in different units of measure. An asset team working in Norway is unlikely to use the same units as a team working in North America, for example. It is also poor practice to mix units of measure in the Corporate Well Master, because queries become more complex and time-consuming when conversions must be performed on the fly. The best practice is to convert the values to a common UOM system, such as metric or imperial, and to retain the original UOM values with the data. This way, the data can be converted back for exchange purposes as necessary.
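As a minimal sketch of this practice, assuming a metric database standard and a hypothetical conversion table that covers only a few units, each value can be stored in the standard unit alongside its original value and unit, and then converted back out for exchange:

```python
from dataclasses import dataclass

# Hypothetical conversion table to a metric database standard.
# Each entry maps a source unit to (standard unit, multiplication factor).
TO_STANDARD = {
    "ft": ("m", 0.3048),            # exact by definition
    "m": ("m", 1.0),
    "bbl": ("m3", 0.158987294928),  # US oil barrel, exact by definition
    "m3": ("m3", 1.0),
}


@dataclass(frozen=True)
class Measurement:
    value: float           # value in the database standard unit
    uom: str               # database standard unit
    original_value: float  # value as reported by the source
    original_uom: str      # unit as reported by the source


def normalize(value: float, uom: str) -> Measurement:
    """Convert to the standard unit while retaining the original value and unit."""
    if uom not in TO_STANDARD:
        raise ValueError(f"unsupported unit of measure: {uom}")
    std_uom, factor = TO_STANDARD[uom]
    return Measurement(value * factor, std_uom, value, uom)


def export_as(m: Measurement, target_uom: str) -> float:
    """Convert the stored standard value into a target unit for data exchange."""
    if target_uom not in TO_STANDARD:
        raise ValueError(f"unsupported unit of measure: {target_uom}")
    std_uom, factor = TO_STANDARD[target_uom]
    if std_uom != m.uom:
        raise ValueError(f"cannot express {m.uom} as {target_uom}")
    return m.value / factor
```

Queries run against the standard `value` column only. The original value and unit remain available for audit or round-tripping back to the source.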

CRS: Probably the most critical aspect of transformation is Coordinate Reference System (CRS) conversion. In this case, it is recommended to store the original coordinates for a point, such as the Well surface or Bottom Hole location, along with a set of coordinates converted to a database standard, such as WGS84, for consistent query and mapping. At EnergyIQ, we store three sets of coordinates for every point: the original, a regional standard, and a global standard. To perform the conversion, companies typically use a third-party tool such as Blue Marble or ESRI, since the mathematics of the conversion requires a high degree of rigor. Applying the right conversion to coordinates is extremely important, yet it remains a common source of error with significant implications.
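The three-coordinate storage pattern can be sketched as a record that keeps the original coordinates untouched next to the two converted sets. The converter callables below are hypothetical placeholders. The sketch deliberately does no geodetic math, because in practice the conversion would be delegated to a dedicated tool such as those mentioned above.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

Coord = Tuple[float, float]
# A converter takes (x, y, source_crs) and returns (x, y) in its own target CRS.
# Hypothetical: in practice it would wrap a dedicated conversion tool.
Converter = Callable[[float, float, str], Coord]


@dataclass(frozen=True)
class PointLocation:
    original: Coord
    original_crs: str
    regional: Coord
    regional_crs: str
    global_std: Coord
    global_crs: str


def locate(x: float, y: float, crs: str,
           to_regional: Converter, regional_crs: str,
           to_global: Converter, global_crs: str = "WGS84") -> PointLocation:
    """Store the original coordinates untouched alongside both converted sets."""
    return PointLocation(
        original=(x, y), original_crs=crs,
        regional=to_regional(x, y, crs), regional_crs=regional_crs,
        global_std=to_global(x, y, crs), global_crs=global_crs,
    )
```

Because the original CRS is stored with each point, a point that was converted incorrectly can be reconverted later without going back to the source.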

Master Reference Values: An area that often gets overlooked in terms of data exchange is reference values. The reality is that inconsistent names are used across multiple applications to define formations or describe fluid types, for example. This makes consistent query and reporting extremely complex, if not impossible, and can lead to miscalculations. It is important to establish enterprise standards for key reference values and to maintain cross-references between source systems to preserve consistency. There are many good sources of reference values available so, once again, it is not necessary to build these from scratch.
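A cross-reference of this kind can be as simple as a lookup keyed by source system and source value. In the following sketch, the system names, the fluid-type vocabulary, and the function names are all hypothetical. Unmapped values raise an error instead of passing through, which forces them into review.

```python
from typing import Dict, Tuple

# Hypothetical enterprise standard and cross-reference for fluid types.
# Key: (source system, value used in that system) -> enterprise standard value.
FLUID_TYPE_XREF: Dict[Tuple[str, str], str] = {
    ("app_a", "O"): "OIL",
    ("app_a", "G"): "GAS",
    ("app_b", "Crude Oil"): "OIL",
    ("app_b", "Natural Gas"): "GAS",
    ("app_b", "Produced Water"): "WATER",
}


def to_enterprise(source: str, value: str) -> str:
    """Translate a source-system value into the enterprise standard."""
    try:
        return FLUID_TYPE_XREF[(source, value)]
    except KeyError:
        # Unmapped values are surfaced for review rather than passed through silently.
        raise LookupError(f"no enterprise mapping for {value!r} from {source}") from None


def to_source(source: str, standard_value: str) -> str:
    """Translate an enterprise standard value back into a source system's vocabulary."""
    for (src, value), std in FLUID_TYPE_XREF.items():
        if src == source and std == standard_value:
            return value
    raise LookupError(f"{source} has no value mapped to {standard_value!r}")
```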

Note that one of the most important reference standards relates to well status, which we will consider in detail in the next section.

3.3 Data Objects

Data objects are not typically considered part of governance, but we include them in this section because, as a way to consistently transfer and manage data, they reflect the importance of standardized definitions at an industry-wide level.

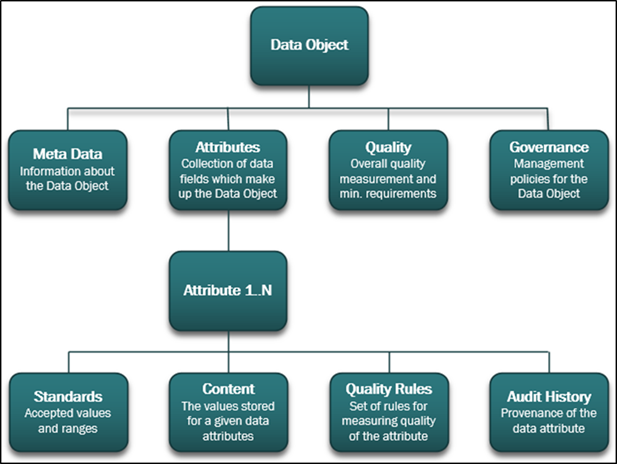

A Data Object is defined as a collection of data attributes combined with the information required to manage the object to support business workflows; see Figure 3. This includes the provenance and quality of the data. A key feature of a Data Object is that it is independent of technology, so while most objects are defined and implemented as JSON, this should not be a requirement.
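Here is a minimal sketch of such a structure. The class and field names are hypothetical and do not describe the EnergyIQ or OSDU schemas. The business attributes travel together with provenance and quality results. JSON appears only as one possible serialization of a neutral in-memory form, not as part of the object's definition.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List


@dataclass
class Provenance:
    source_system: str
    loaded_at: str  # ISO 8601 timestamp


@dataclass
class QualityResult:
    rule_set: str
    passed: int
    failed: int


@dataclass
class DataObject:
    object_type: str                 # e.g. "WellHeader"
    attributes: Dict[str, Any]       # the business content
    provenance: Provenance           # where the content came from
    quality: List[QualityResult] = field(default_factory=list)

    def to_dict(self) -> Dict[str, Any]:
        """Plain, technology-neutral representation of the object."""
        return asdict(self)


def to_json(obj: DataObject) -> str:
    """JSON is one possible serialization of the neutral form, not a requirement."""
    return json.dumps(obj.to_dict(), sort_keys=True)
```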

One of the challenges with the PPDM data model is that its definition is tied to a specific technology. To address this, at EnergyIQ we created technology-independent data objects based upon the thirty years of knowledge captured within PPDM. This is similar to the approach taken by the OSDU, and there is a high degree of alignment between the two.

Figure 3: Data Object Structure

The EnergyIQ IQexchange layer transfers data objects between the TDM and applications and data stores through an API that abstracts the underlying model from the access layer. The data objects have the same structure regardless of the target data store, with an agent responsible for mapping the content to the store. This approach ensures that any application can consume or generate objects for transmission through an agent that is independent of IQexchange, making this a fully flexible platform. It also ensures that data objects can be extended, and new ones created, without affecting existing agents, which is crucial in a rapidly changing industry.
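The sketch below shows the agent pattern in general terms. It is not the IQexchange API, and the names `Agent`, `Exchange`, `RelationalAgent`, and `DocumentAgent` are hypothetical. The exchange passes the same object structure to every registered agent, and each agent maps only what its store needs. An object extended with a new attribute therefore does not break an agent that ignores that attribute.

```python
from abc import ABC, abstractmethod
from typing import Any, Dict, List, Tuple

# Simplified data object: {"type": ..., "attributes": {...}}
DataObject = Dict[str, Any]


class Agent(ABC):
    """Maps the common data object structure onto one particular target store."""

    @abstractmethod
    def write(self, obj: DataObject) -> None:
        ...


class RelationalAgent(Agent):
    """Keeps only the columns its table knows about; new attributes are ignored."""

    def __init__(self) -> None:
        self.rows: List[Tuple[Any, Any]] = []

    def write(self, obj: DataObject) -> None:
        attrs = obj["attributes"]
        self.rows.append((attrs.get("uwi"), attrs.get("well_name")))


class DocumentAgent(Agent):
    """Stores the whole object as a document, including any new attributes."""

    def __init__(self) -> None:
        self.documents: List[Dict[str, Any]] = []

    def write(self, obj: DataObject) -> None:
        self.documents.append({"_type": obj["type"], **obj["attributes"]})


class Exchange:
    """Routes each published object to every registered agent."""

    def __init__(self) -> None:
        self.agents: List[Agent] = []

    def register(self, agent: Agent) -> None:
        self.agents.append(agent)

    def publish(self, obj: DataObject) -> None:
        for agent in self.agents:
            agent.write(obj)
```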

Establishing an industry-agreed set of Data Objects for data exchange and storage will eliminate many of the challenges that we face today and have an impact similar to that experienced by the financial industry when it adopted a comparable approach.

3.4 Best Practices

Throughout this section, and this blog, we have discussed best practices for data management and consumption. It is worth pointing out that these are only a small subset of the wealth of knowledge available in the public domain and through the standards organizations. We have built a great deal of this knowledge into the EnergyIQ energy data platform and continue to productize best practices so that they are repeatable and the knowledge is not lost to the industry.

4. Master Data

There are numerous definitions for master data depending upon the context in which it is being considered. For our purposes we will define master data to be the set of attributes that establish the status of the business at any point in time and the relationships between the key business entities.

“Master Data is the foundation for the Digital Transformation,” Gartner

There are different domains for master data, including well, facilities, operations, finance, customer, and land. In this discussion, we will focus on the well domain, since everything ultimately ties back to the well and this is typically the domain where most operators start their master data management initiatives.

In our experience, operators typically start with around 30 master attributes in the well domain and, of these, about 80% are consistent between companies. Where companies have identified hundreds of master attributes, it is questionable whether they should all be classified this way, and the project is likely to be compromised, if not fail outright.

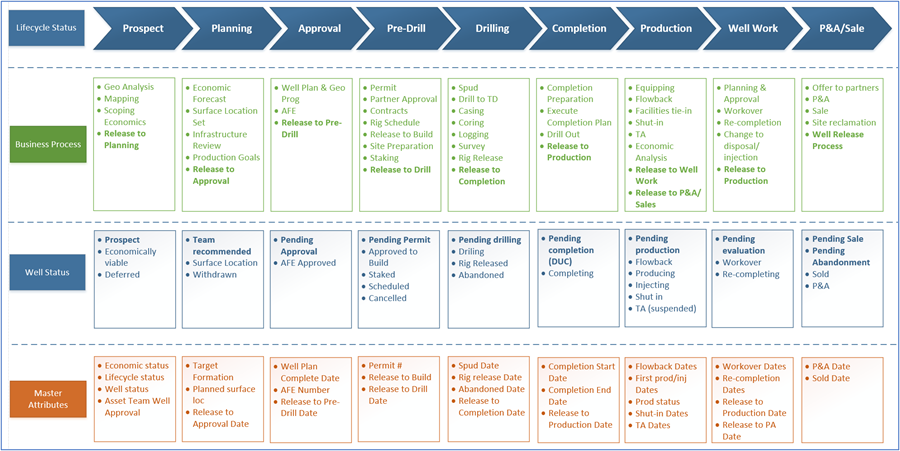

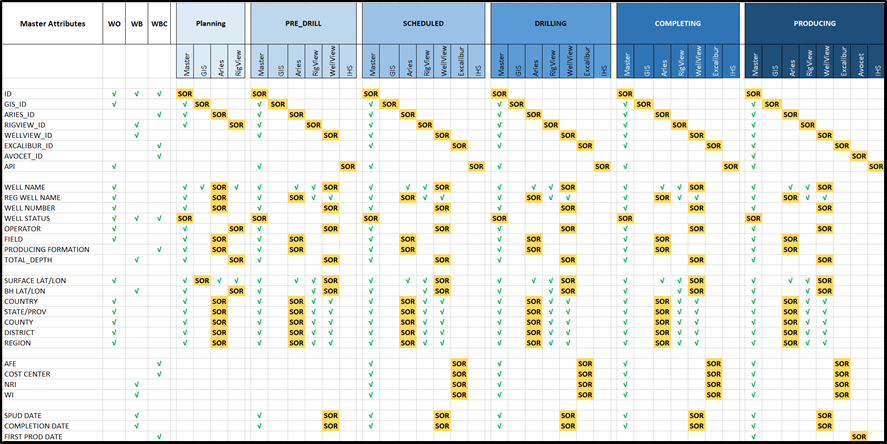

4.1 Trust Matrix

Note that while the set of master attributes is consistent over the lifecycle of the well, the content of each master attribute can, and probably will, change. For example, early in the lifecycle, a conceptual or planned well may have a temporary name. Later, when it is associated with an AFE and a permit, it may be assigned a permanent name along with a unique identifier. Operators must, therefore, identify the System of Record (SOR) for each attribute at each phase of the Well lifecycle. Furthermore, if that attribute changes, it will be necessary to update other sources of the same attribute. Figure 4 illustrates a trust matrix that defines the set of master attributes, the phases of the well lifecycle, and the SOR for each attribute at each phase.
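One way to represent a trust matrix is a nested lookup from attribute to lifecycle phase to SOR. The following minimal sketch uses hypothetical phases, attributes, and application names. It accepts a change only when it comes from the SOR for the current phase, and it returns the other systems that must then be updated.

```python
from typing import Dict, List

# Hypothetical trust matrix: attribute -> lifecycle phase -> system of record (SOR).
TRUST_MATRIX: Dict[str, Dict[str, str]] = {
    "well_name": {"Planning": "GeoApp", "Drilling": "DrillingApp", "Production": "ProductionApp"},
    "surface_location": {"Planning": "GeoApp", "Drilling": "DrillingApp", "Production": "DrillingApp"},
}


def system_of_record(attribute: str, phase: str) -> str:
    return TRUST_MATRIX[attribute][phase]


def propagation_targets(attribute: str, phase: str, source: str, systems: List[str]) -> List[str]:
    """Accept a change only from the SOR and return the other systems that must be updated."""
    sor = system_of_record(attribute, phase)
    if source != sor:
        raise ValueError(f"{source} is not the SOR for {attribute} during {phase}")
    return [s for s in systems if s != sor]
```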

Figure 4: Trust Matrix

This matrix also illustrates the importance of being able to synchronize data between applications and the master data store. A platform such as IQexchange is essential to seamlessly exchange data and to trigger activities based upon the exchange. Simple ETL-based solutions cannot handle the complexity of application APIs.

4.2 Well Status

One of the most important master attributes is Well Status, yet it does not get as much attention as it should. It matters not just for reporting and for defining plot symbology; it is also a key driver for workflow activities. When the status of a well changes, it triggers data movement, the execution of the business rules that comprise workflow activities, and enterprise-wide reporting.

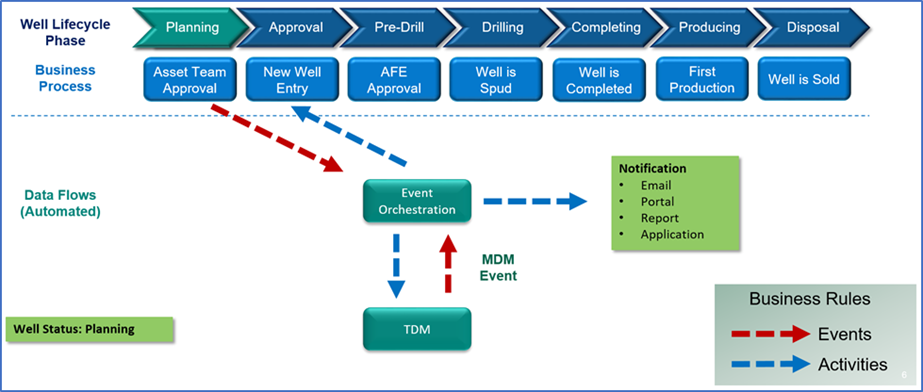

When helping companies implement an energy data platform, we first define the phases of the Well Lifecycle as illustrated in Figure 5; there will typically be between 6 and 9 of these depending on the size and structure of the organization. We will then establish the Well Status values for each phase of the lifecycle based upon an analysis of key business processes.

Figure 5: Well Status across the Well Lifecycle

Once this is completed, we need to determine which attribute (or attributes) is used to establish the Well Status, since most applications do not track it. These attributes will be a subset of the overall master attributes and will be used to advance the status of the well and trigger additional activities, as we will discuss in the next section.
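Here is a minimal sketch of deriving Well Status from master attributes, assuming hypothetical attribute names and status values. A real implementation would use the statuses agreed for each lifecycle phase. The rules are checked from the latest lifecycle event to the earliest, and the first populated attribute decides the status.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical rules, checked in order from the latest lifecycle event to the earliest:
# the first attribute that is populated determines the Well Status.
STATUS_RULES: List[Tuple[str, str]] = [
    ("abandonment_date", "Abandoned"),
    ("first_production_date", "Producing"),
    ("spud_date", "Drilling"),
    ("permit_number", "Permitted"),
    ("afe_number", "Planned"),
]


def derive_status(well: Dict[str, Any]) -> str:
    for attribute, status in STATUS_RULES:
        if well.get(attribute):
            return status
    return "Conceptual"  # nothing beyond a location and identifier is known yet
```

Keeping the rules as data rather than as branching code makes them easier to review and adjust as the status definitions evolve.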

It should also be noted that certain Well Status changes will trigger an advance in the Well Lifecycle phase. This is particularly important because it typically reflects a change in responsibility from one business entity to another. This transfer of responsibility is typically where errors happen, and automating the data flows and activities associated with the transition reduces risk and increases efficiency.

These rules can then be implemented within the master data store so that they are automatically executed whenever the associated data changes are detected.
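These event-driven rules can be sketched as follows. The status-to-phase mapping, the class name `StatusRules`, and the example actions are hypothetical. When a status change is detected, the sketch runs the actions registered for the new status. If the change also moves the well into a new lifecycle phase, it additionally runs the handoff actions.

```python
from typing import Callable, Dict, List

# Hypothetical mapping from Well Status to Well Lifecycle phase.
STATUS_TO_PHASE: Dict[str, str] = {
    "Conceptual": "Exploration",
    "Planned": "Planning",
    "Permitted": "Planning",
    "Drilling": "Drilling",
    "Producing": "Production",
    "Abandoned": "Abandonment",
}

# An action receives (well_id, old_status, new_status) and returns a log message.
Action = Callable[[str, str, str], str]


class StatusRules:
    def __init__(self) -> None:
        self.on_status: Dict[str, List[Action]] = {}
        self.on_phase_change: List[Action] = []

    def when_status(self, status: str, action: Action) -> None:
        self.on_status.setdefault(status, []).append(action)

    def when_phase_changes(self, action: Action) -> None:
        self.on_phase_change.append(action)

    def status_changed(self, well_id: str, old: str, new: str) -> List[str]:
        """Run the rules for a detected status change and return what was executed."""
        if old == new:
            return []
        log = [action(well_id, old, new) for action in self.on_status.get(new, [])]
        # A phase advance usually means a handoff between business units.
        if STATUS_TO_PHASE[old] != STATUS_TO_PHASE[new]:
            log += [action(well_id, old, new) for action in self.on_phase_change]
        return log
```

For example, a move from "Planned" to "Permitted" stays within the Planning phase and runs only the status actions. A move from "Permitted" to "Drilling" also fires the handoff actions.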

4.3 Matching, Aggregation, and Blending

As we discussed, master data management involves loading data from multiple sources and then applying business rules to generate a single source of the truth. The business rules can be classified as matching, blending, and aggregation.

Matching: As data is loaded from different sources, Well Matching becomes increasingly important to ensure that the correct identifier is assigned to the right record. This matching must also take into account the level of the Well Hierarchy since different applications deal with data at different levels even though they always refer to it as a Well. To meet these requirements, the Well Matching routines need to be able to support:

- A set of configurable parameters for matching

- Tolerances, e.g. surface location within 5 feet

- Weighting of the matching parameters

- Match percentages, e.g. 5 out of 8 parameters meet the match criteria, giving a 70% match (after weighting)

- Thresholds to define whether Wells match or not
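The following sketch combines these capabilities into a weighted match score: configurable parameters, per-parameter tolerances, weights, a match percentage, and a threshold. The parameter names, the weights, and the 70% default threshold are assumptions for illustration. The distance check also assumes both surface locations are in the same projected CRS and units.

```python
import math
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class MatchParameter:
    name: str                          # attribute to compare
    weight: float                      # relative importance
    compare: Callable[[Any, Any], bool]


def within_distance(tolerance: float) -> Callable[[Any, Any], bool]:
    """Tolerance test for (x, y) points in the same projected CRS and units."""
    return lambda a, b: math.dist(a, b) <= tolerance


def same_text(a: Any, b: Any) -> bool:
    return str(a).strip().lower() == str(b).strip().lower()


def match_score(a: Dict[str, Any], b: Dict[str, Any], params: List[MatchParameter]) -> float:
    """Weighted percentage of parameters that meet their match criteria."""
    total = sum(p.weight for p in params)
    # A missing value on either side counts as a non-match.
    matched = sum(
        p.weight for p in params
        if a.get(p.name) is not None and b.get(p.name) is not None
        and p.compare(a[p.name], b[p.name])
    )
    return 100.0 * matched / total


def is_match(a: Dict[str, Any], b: Dict[str, Any], params: List[MatchParameter],
             threshold: float = 70.0) -> bool:
    return match_score(a, b, params) >= threshold
```

Persisting each score together with the parameter values that produced it would supply the audit history needed to review and tune the weights and thresholds over time.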

Well Matching is not an exact science and so it is important to maintain an audit history along with the corresponding tools to be able to review and iteratively improve the matching process over time. These requirements and the need to learn over time lead towards Artificial Intelligence techniques that can create new rules based upon feedback loops to increase confidence in matching.

Aggregation: One of the main challenges of integrating data from multiple sources is that each source may define a Well differently. For example, if we load:

- IHS commercial data in the US, the records are delivered with a 14-digit API number as the primary identifier. This typically identifies a completion, but not always.

- Proprietary WellView data, the records are defined at the Wellbore level.

- Proprietary Avocet data, the records are defined at the Wellbore or the Completion level.

Since we do not want ‘floating’ Wellbore or Completion records without a corresponding Well Origin parent, we need to manufacture the parent record by applying a set of business rules to create the parent from information in the child. We do this through a process that EnergyIQ terms Aggregation, in which key attributes are rolled up to the parent. Aggregation depends upon a set of business rules that determine which attributes will be promoted from the lower-level records to the higher-level record.

This can be a complex process but one that is necessary to maintain integration integrity.
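Here is a minimal sketch of Aggregation for US records keyed by a 14-digit API number. It assumes, as a simplification, that the first 10 digits of the number identify the Well Origin, since the trailing digits are conventionally the sidetrack and event codes. Real data would need exception handling for the cases noted above, where the API number does not map cleanly. The promotion rules are hypothetical examples of rolling child attributes up to a manufactured parent.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List


def origin_id(api: str) -> str:
    """Assumed Well Origin key: the first 10 digits of a 14-digit US API number."""
    digits = api.replace("-", "")
    if len(digits) != 14 or not digits.isdigit():
        raise ValueError(f"expected a 14-digit API number, got {api!r}")
    return digits[:10]


# Hypothetical promotion rules: how each child attribute rolls up to the parent.
PROMOTION_RULES: Dict[str, Callable[[List[Any]], Any]] = {
    "surface_latitude": lambda values: values[0],  # children share the origin's surface location
    "spud_date": min,                              # earliest spud of any child (ISO dates sort correctly)
    "total_depth_ft": max,                         # deepest child
}


def aggregate(children: List[Dict[str, Any]]) -> Dict[str, Dict[str, Any]]:
    """Manufacture a Well Origin parent for every group of child records."""
    groups: Dict[str, List[Dict[str, Any]]] = defaultdict(list)
    for child in children:
        groups[origin_id(child["api"])].append(child)

    parents: Dict[str, Dict[str, Any]] = {}
    for oid, kids in groups.items():
        parent: Dict[str, Any] = {"origin_id": oid, "child_count": len(kids)}
        for attribute, rule in PROMOTION_RULES.items():
            values = [k[attribute] for k in kids if k.get(attribute) is not None]
            if values:
                parent[attribute] = rule(values)
        parents[oid] = parent
    return parents
```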

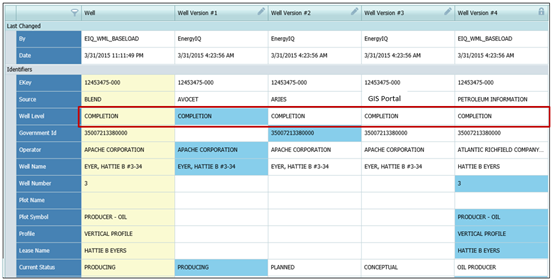

Blending: The concept of blending data from multiple sources has been around for a long time. It is important as it involves the application of business rules to create a ‘Golden’ record that can be used by the business with confidence that it is the best information available and meets approved governance and quality standards.

Blending is the process of creating a single, most trusted record at a given level in the Well Hierarchy from two or more records at the same level in the Well Hierarchy from different sources. Figure 6 illustrates multiple sources of Well Header records that have been blended to create a most trusted version for consumption by the organization.

Figure 6: Multi-Source Record Blending

Note that the rules for blending need to be flexible as they might vary by geographic location, phase of the Well lifecycle, or whether the well is operated or interest only.
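Blending is often implemented as source-priority rules per attribute. The following sketch makes those rules vary by region. The source names, regions, and priorities are hypothetical, and the key could be extended with lifecycle phase or operated status in the same way. For each attribute, the first source in the priority list that has a value wins. The source that supplied each value is kept as lineage.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical source-priority rules keyed by (region, attribute).
# For each attribute, the first source in the list that has a value wins.
BLEND_RULES: Dict[Tuple[str, str], List[str]] = {
    ("US", "well_name"): ["internal_master", "ihs", "wellview"],
    ("US", "spud_date"): ["wellview", "internal_master", "ihs"],
    ("default", "well_name"): ["internal_master", "wellview"],
    ("default", "spud_date"): ["wellview", "internal_master"],
}


def blend(records: Dict[str, Dict[str, Any]], region: str,
          attributes: List[str]) -> Dict[str, Dict[str, Any]]:
    """Build a most-trusted record from same-level records keyed by source system."""
    values: Dict[str, Any] = {}
    lineage: Dict[str, str] = {}  # which source supplied each blended value
    for attribute in attributes:
        priority = BLEND_RULES.get((region, attribute)) or BLEND_RULES[("default", attribute)]
        for source in priority:
            value = records.get(source, {}).get(attribute)
            if value is not None:
                values[attribute] = value
                lineage[attribute] = source
                break
    return {"values": values, "lineage": lineage}
```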

The EnergyIQ rules for matching, blending and aggregation have been developed over many years of working with a broad range of operators and dealing with their data management challenges. These rules are imperative for accurate data integration across the lifecycle and cannot be ignored.

4.4 Data Validation

We will briefly touch on data validation here even though it is a field that probably deserves its own blog or series of blogs.

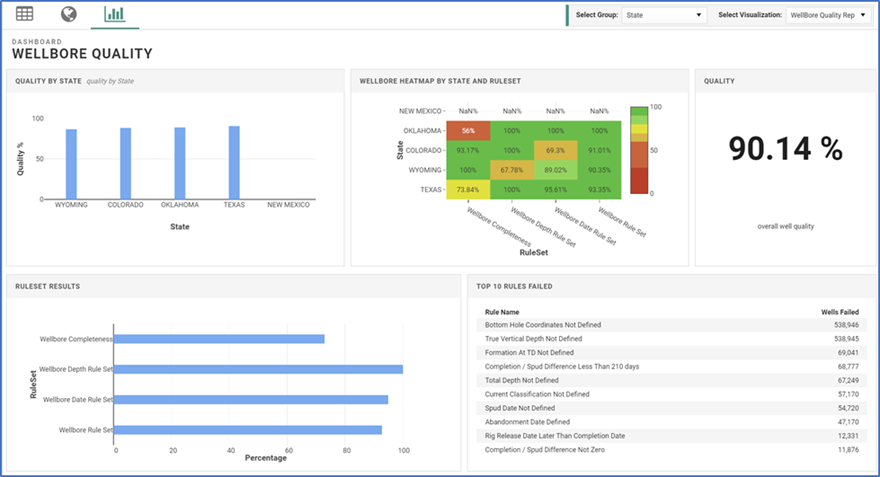

Data quality is obviously hugely important when building out a master data store. There are literally thousands of data quality rules that can be implemented as part of any solution to validate data. Many of these rules are publicly available from standards organizations such as PPDM and Energistics; many others are derived through experience, typically from an analysis of what went wrong in a given situation. The challenge is typically less about which rules to apply than about how to apply them and how best to interpret the results as part of a decision-making process.

At EnergyIQ we run sets of data quality rules against a Data Object and store the quality results as part of the object so that they can be included in analysis of the data as an indicator of the risk of decisions made using that data. A separate Data Quality Service receives a data object and then runs the identified set of rules for that object and returns the quality results to the calling application. More than one set of rules can be applied to an object; these could be a facet of quality such as timeliness, accuracy or completeness or they could be specific to a discipline such as geoscience, engineering, or finance.
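The core of such a service can be sketched as a function that receives a data object, runs the named rule sets against it, and stores the results on the object. The rule-set names, rule identifiers, and score formula below are hypothetical and chosen only for illustration. A deployed service would wrap this logic behind an API.

```python
from typing import Any, Callable, Dict, List

Rule = Callable[[Dict[str, Any]], bool]

# Hypothetical rule sets, one per quality facet; each rule returns True when it passes.
RULE_SETS: Dict[str, Dict[str, Rule]] = {
    "completeness": {
        "has_well_name": lambda a: bool(a.get("well_name")),
        "has_spud_date": lambda a: bool(a.get("spud_date")),
    },
    "accuracy": {
        "latitude_in_range": lambda a: a.get("latitude") is not None and -90 <= a["latitude"] <= 90,
    },
}


def run_quality(obj: Dict[str, Any], rule_set_names: List[str]) -> Dict[str, Dict[str, Any]]:
    """Run the requested rule sets, store the results on the object, and return them."""
    results: Dict[str, Dict[str, Any]] = {}
    for name in rule_set_names:
        rules = RULE_SETS[name]
        failed = [rule_id for rule_id, rule in rules.items() if not rule(obj["attributes"])]
        results[name] = {
            "score": 100.0 * (len(rules) - len(failed)) / len(rules),
            "failed": failed,
        }
    obj.setdefault("quality", {}).update(results)
    return results
```

Because the results travel with the object, downstream analysis can filter or weight records by their quality scores.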

It is worth noting that the EnergyIQ solution stores the data objects and associated quality results in an Elastic index; Elastic is a leading technology for storing and rapidly retrieving vast quantities of data. Without a search technology platform such as Elastic, it would not be practical to store the results and generate dashboards such as the one illustrated in Figure 7.

Figure 7: Data Quality Dashboard

Having a clear understanding of the quality and history of data is critical to reducing decision-making risk and to supporting the workflow automation that we will discuss in the next section.

5. Workflow Automation

One of the key digital trends is to establish a connected energy workplace where different areas of the business are connected through common data, workflows, and technology. The work that we discussed in the previous section on master data establishes the foundation for the connected energy workplace through workflow automation.

By synchronizing master data across the different business units, we can recognize when changes occur and execute business rules that respond to these events by executing a series of activities; see Figure 8.

Figure 8: Workflow Automation

As an example, one event that typically causes a lot of angst over the lifecycle is defining when a new well comes into existence and what activities need to be triggered at that point. Initially a well is typically just a surface location with an identifier on an existing lease or prospect that may have reserves assigned to it. While this exists within the exploration group it may not be recognized as a new well at the enterprise level. In many instances, it is only defined as a new well and assigned a unique identifier when drilling is planned and an AFE has been generated. The challenge is that the connection between the conceptual well and the planned well is often broken at this point despite the fact that there is valuable information assigned to the original location. Hence it is important to establish automated workflows as early in the lifecycle as practical and assign a unique identifier at this time as we discussed earlier.

There are hundreds of business events that occur over the lifecycle of the well and it can take some time to build up all of the business rules. However, this is definitely a case where you don’t want to try and boil the ocean; select those events and activities that typically cause problems and focus on resolving those. You can build up the business rules library as you go. Also, don’t re-invent the wheel since there is a great deal of industry knowledge available. One underused resource is the PPDM business rules library originally developed by Pat Rhynes.

Workflow automation is the nirvana that operators, and vendors, are striving for. It increases efficiency by reducing downtime and minimizing errors and rework, enables accurate and consistent reporting, and breaks down the silos between organizational units.

6. Looking to the Future

These are interesting and challenging times for the oil and gas industry, and it is clear that significant change is underway on many fronts. Operators are increasingly turning to digital technology to prepare for an uncertain future, and the foundation of this is access to a single source of the most up-to-date and trusted data: the energy data platform.

Access to enterprise-wide integrated data has long been a challenge for an industry that has historically worked in distinct business units and managed data in silos. However, innovations in data management thinking and technology over the last few years, both within the oil and gas industry and in peer industries, have resulted in advances that are rapidly breaking down the barriers.

As part of their ongoing research, Quorum Software has interviewed many operators, attended conferences, and reviewed literature to understand the digital trends that will shape the future roadmap of the company. In this section we will discuss the key trends that relate to the energy data platform.

6.1 Standardized Data Storage and Access

The OSDU initiative, spearheaded by Shell and other majors, has gathered a significant amount of momentum in a very short space of time, with over 150 members, of which close to 40 are operators.

The goal is to establish a standardized format for storing data in the cloud along with the APIs for data ingestion and consumption. The major cloud providers including Microsoft, AWS, Google, and IBM have signed up to deliver the platform as a service along with other major technology providers including Dell.

This approach differs significantly from previous standards initiatives in one major respect: the ingestion and consumption APIs. The PPDM data model is a well-respected standard for storing data but has two significant issues: the model is tied to relational technology, and implementers are free to load data into the model in any way that they see fit. This causes a major problem when developing applications against the model, because 10 different instances might store data in 10 different ways.

There are challenges ahead for the OSDU in trying to corral a community of people with day jobs to develop, maintain, and support complex software through an open source structure. Regardless, the support for the initiative across the industry sends a clear message about where things are heading.

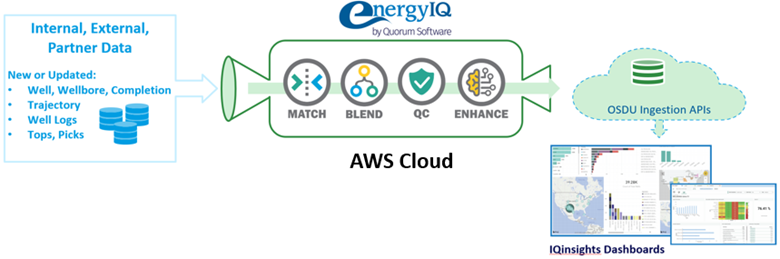

It is also evident that the OSDU is not a panacea for data management and that the functionality provided by solutions such as EnergyIQ’s TDM and IQexchange is still needed to manage the data effectively and deliver it to a store such as the OSDU. Figure 9 illustrates a workflow in which data is prepared by TDM for delivery to an OSDU implementation that was developed as part of a proof of concept with AWS and Pariveda.

Figure 9: EnergyIQ and OSDU in the AWS Cloud

6.2 Collaboration

Operators are being overwhelmed by the pace of technology change and are increasingly turning to partnerships with vendors to help them navigate the transition.

“Things are changing so fast that we can’t keep up. We can no longer do it all ourselves and rely on collaboration with and between vendors.” Lee Holder, VP Upstream Digital Transformation, Shell.

We are seeing examples of this, such as Microsoft’s alliance with WPX to guide WPX through its digital transition. Beyond the OSDU, we are also seeing alliances between vendors to deliver integrated solutions to the industry, and this will increasingly become the norm.

Interestingly, we are also witnessing more collaboration between operators in areas where they believe that the benefits of working together outweigh any potential competitive advantage. We see this in the form of data sharing, participating in standards definitions such as the OSDU, and joint initiatives with vendor consortiums.

It is evident that the whole area of data management is one where the industry feels that it is better to work together and to differentiate on the analysis of this data. This is a significant change from the past and one that will have a hugely beneficial impact on the future of the industry.

6.3 Cloud Migration

It is obvious to anyone in the industry that there is a significant migration to the cloud and that SaaS-based models for software deployment are replacing legacy applications at a rapid rate.

What is interesting, and potentially more challenging, is the migration of huge volumes of data to the cloud with the associated latency and cost issues. The support for the OSDU initiative by 4 of the leading cloud providers makes it clear that they consider this to be a lucrative market and one that justifies significant investment on their part.

Although some operators are reluctant to move all of their data to the cloud, it is inevitable, in our opinion, that the associated benefits of availability, scalability, and access to advanced services such as AI and ML will make this the norm as prices continue to fall.

6.4 Application Innovation

Access to a centralized data store in the cloud is driving innovation in the application space. By having a standardized API to access the most trusted data, a significant barrier to entry for smaller software developers has been removed. Rather than have to focus a large part of their development effort on loading and managing a copy of the data that they need, startups can focus on developing innovative tools for the visualization and analysis of this data that will deliver competitive advantage to their client base.

This is going to have a major impact on traditional software vendors and will be of long-term benefit to the industry. Quorum Software has made their own adjustments to this new world through the development of the OnDemand software suite.

7. Summary

Establishing an energy data platform is crucial to the success of digital transformation initiatives. However, loading all of the data into a data warehouse or lake and expecting analytics software to make sense of it results in the wild west of data management.

In this blog, we have identified the key components of the energy data platform and the best practices that are associated with this. While building out this data store at an enterprise level can seem daunting, the technology, expertise, and, most importantly, the drive to be successful are all in place.

As we have tried to emphasize, the keys to success are to adopt a phased approach, focusing on the most important areas first, and to leverage the vast amount of IP that is available in the public domain. Oh, and also take a good look at Quorum’s EnergyIQ software; it solves the hard problems that other solutions don’t even recognize as problems.


About The Author

Steve Cooper is Vice President of Digital Strategy for Quorum Software, with responsibility for researching the impact of industry and technology trends. Before EnergyIQ was acquired by Quorum in 2020, Steve was its CEO and founder, and he developed a sophisticated Well Master Data Management platform that supports critical decision-making at many oil and gas companies today. He is a past CIO of IHS Energy and Chief Communications Officer and Board Member with the PPDM Association, and he has also served on the Board of Directors for two publicly traded gold mining companies.

Steve has been published in numerous journals and has presented at industry conferences on subjects including data quality, governance, master data management, analytics, and visualization. Recently, Steve joined the Data Analytics advisory board at Denver University and is an occasional contributor at the Colorado School of Mines.
