Data Governance for Upstream Operators: Master Data, Controls, and a Single Source of Truth

Suites like Upstream On Demand are designed to connect upstream workflows from field to office rather than leave each team working in isolation. Data governance becomes necessary when those workflows rely on the same records but manage them in different systems without consistent control.

Most upstream organizations do not struggle because they lack data. They struggle because key records move across systems without a shared structure for ownership, validation, and change. The result is friction across field operations, accounting, land, and reporting as each team works from slightly different versions of the same data.

Key Takeaway

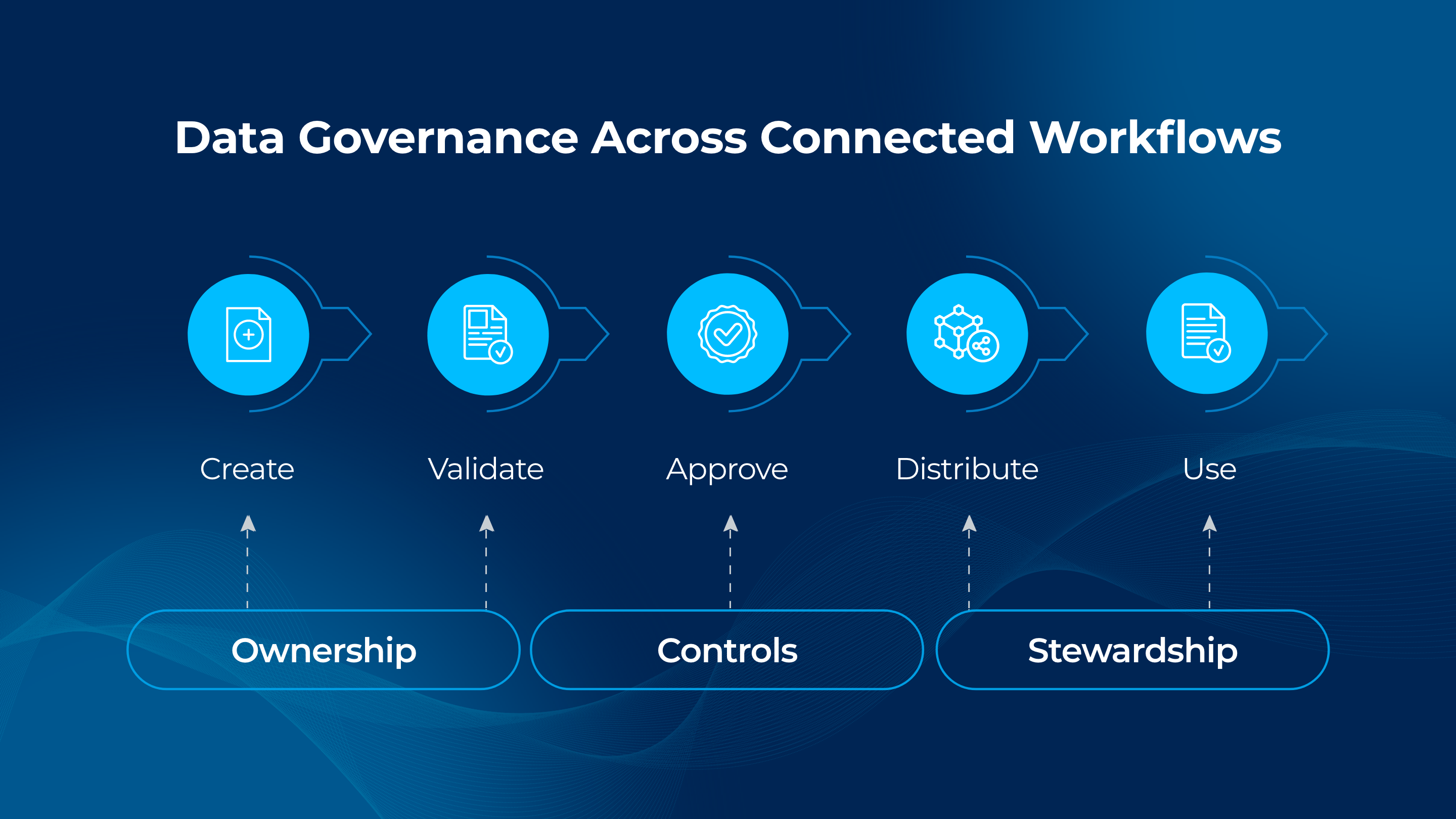

Data governance in upstream environments is a control layer across connected workflows. A single source of truth depends on consistent ownership, validation, and stewardship as data moves from field capture through accounting and reporting. When governance is aligned with how workflows interact, ambiguity decreases and downstream processes remain stable.

How Data Moves Across Upstream Workflows

Upstream operations depend on a shared set of master and reference data that flows across multiple systems. Well identifiers, ownership interests, facility structures, and accounting hierarchies are created, updated, and consumed at different points in the workflow.

Field data is captured and associated with assets. That data feeds production accounting, where volumes and ownership are applied. The same records support joint interest billing, regulatory reporting, and internal analysis. Each step depends on the integrity of the same underlying data.

When these workflows are not aligned, data begins to diverge. A change made in one system may not propagate cleanly to another. Teams compensate by maintaining local copies, adjusting reports, or reconciling differences after the fact.

Where Variability Enters the Process

Variability enters when control over shared data is inconsistent across systems and teams. Differences in how records are created, updated, or validated introduce misalignment that expands as data moves downstream.

Common points of variability include:

- Inconsistent ownership of master data

- Uncontrolled changes to key identifiers or attributes

- Lack of validation between systems

- Delayed propagation of updates across workflows

These conditions do not remain isolated. They compound as data moves from operations into financial and regulatory processes.

Impact on Operational and Financial Outcomes

When shared data is not governed consistently, downstream workflows absorb the effort required to correct it. Production accounting teams spend more time reconciling volumes and ownership. Joint interest billing carries higher dispute risk. Regulatory reporting requires additional validation before submission.

The impact shows up as increased manual effort, slower reporting cycles, and reduced confidence in the numbers being produced. Teams spend time confirming which record is correct instead of acting on the information.

In large-scale upstream environments, such as operators managing more than 1,000 wells, governance challenges are amplified by the sheer volume and velocity of data moving across systems. In practice, organizations that unified operational workflows across these assets were able to enforce consistent handling of well, ownership, and production data from field capture through accounting. By embedding control points directly into these workflows, they reduced divergence between systems and significantly lowered the need for manual reconciliation. This led to more reliable production and financial reporting, along with faster cycle times for downstream processes. As governance becomes integrated into daily operations at scale, it shifts from a reactive function to a foundational layer that sustains data integrity across the enterprise.

Where Issues Typically Surface

Issues rarely originate in reporting. They surface there.

The root cause is usually earlier in the workflow, where data is created or modified without consistent controls. A well identifier may differ between systems. Ownership percentages may not align after a change. Facility relationships may be structured differently across applications.

By the time those inconsistencies reach accounting or reporting, they require reconciliation rather than correction at the source.
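The kinds of mismatch described above can be caught with a simple cross-system comparison. The following is a minimal sketch, not a description of any particular product: the two dictionaries stand in for extracts from a field system and an accounting system, and the well IDs, facility names, owner names, and the `find_exceptions` helper are all hypothetical.

```python
from decimal import Decimal

# Hypothetical extracts of the same wells from two systems.
# All identifiers and field names here are illustrative assumptions.
field_system = {
    "WELL-001": {"facility": "PAD-A",
                 "owners": {"OpCo": Decimal("0.60"), "PartnerCo": Decimal("0.40")}},
    "WELL-002": {"facility": "PAD-B",
                 "owners": {"OpCo": Decimal("1.00")}},
}
accounting_system = {
    "WELL-001": {"facility": "PAD-A",
                 "owners": {"OpCo": Decimal("0.60"), "PartnerCo": Decimal("0.35")}},
    "WELL-003": {"facility": "PAD-C",
                 "owners": {"OpCo": Decimal("1.00")}},
}


def find_exceptions(source, target):
    """Return (well_id, issue) tuples describing cross-system mismatches."""
    exceptions = []
    for well_id in sorted(set(source) | set(target)):
        if well_id not in target:
            exceptions.append((well_id, "missing in target"))
            continue
        if well_id not in source:
            exceptions.append((well_id, "missing in source"))
            continue
        src, tgt = source[well_id], target[well_id]
        if src["facility"] != tgt["facility"]:
            exceptions.append((well_id, "facility mismatch"))
        if src["owners"] != tgt["owners"]:
            exceptions.append((well_id, "ownership mismatch"))
        # Ownership interests on each side should total exactly 100%.
        for name, record in (("source", src), ("target", tgt)):
            if sum(record["owners"].values()) != Decimal("1"):
                exceptions.append(
                    (well_id, f"ownership in {name} does not total 100%")
                )
    return exceptions


exceptions = find_exceptions(field_system, accounting_system)
for well_id, issue in exceptions:
    print(well_id, issue)
```

`Decimal` is used instead of floats so that interest fractions compare exactly. Running a check like this where data is created or changed, rather than at reporting time, is what allows a mismatch to be corrected at the source.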

The Role of Systems and Data Control

A workable governance model connects data control to how workflows interact across systems. It defines how critical records are created, validated, and maintained as they move from one process to another.

A structured model includes:

- Clear ownership for master and reference data

- Validation rules that check consistency across systems

- Controlled change processes with approval and traceability

- Stewardship routines that monitor and resolve exceptions

| Governance Element | What It Controls | Operational Impact |
| --- | --- | --- |
| Data ownership | Creation and modification rights | Prevents uncontrolled changes across workflows |
| Validation rules | Cross-system consistency checks | Identifies issues before downstream impact |
| Change control | Approval and audit tracking | Ensures traceable updates |
| Stewardship routines | Ongoing monitoring and correction | Maintains long-term data integrity |

These controls allow data to move across workflows without losing consistency.
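To make the ownership and change-control elements concrete, here is a small sketch of a store that applies a change only after the attribute's owner approves it, and records every step in an audit log. The `GovernedStore` and `ChangeRequest` classes, the role names, and the attribute names are assumptions made for illustration; a production system would persist the log and handle many more cases.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ChangeRequest:
    record_id: str
    attribute: str
    new_value: str
    requested_by: str
    status: str = "pending"


@dataclass
class GovernedStore:
    """Toy master-data store: changes apply only after the owner approves."""
    owners: dict    # attribute -> user allowed to approve changes to it
    records: dict   # record_id -> {attribute: value}
    audit_log: list = field(default_factory=list)

    def _log(self, action, request, actor):
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "record_id": request.record_id,
            "attribute": request.attribute,
            "actor": actor,
        })

    def propose(self, request):
        # Proposing a change never modifies the record itself.
        self._log("proposed", request, request.requested_by)
        return request

    def approve(self, request, approver):
        if self.owners.get(request.attribute) != approver:
            self._log("approval_denied", request, approver)
            raise PermissionError(f"{approver} does not own {request.attribute}")
        self.records[request.record_id][request.attribute] = request.new_value
        request.status = "approved"
        self._log("approved", request, approver)


store = GovernedStore(
    owners={"working_interest": "land_admin"},
    records={"WELL-001": {"working_interest": "0.60"}},
)
request = store.propose(
    ChangeRequest("WELL-001", "working_interest", "0.55", "field_tech")
)
try:
    store.approve(request, approver="field_tech")
except PermissionError:
    pass  # The record is unchanged; the denied attempt is still logged.
store.approve(request, approver="land_admin")
```

The point of the design is that the record and its history change together: there is no path to update `working_interest` that bypasses the owner check or the log.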

What a Scalable Governance Process Looks Like

Governance becomes scalable when it is embedded into the workflow rather than applied after the fact. Data is validated when it is created, changes are controlled when they occur, and exceptions are resolved with clear ownership.

A practical process follows three steps:

1. Identify the master and reference data that flows across workflows

2. Define ownership and approval paths for changes

3. Monitor data quality and resolve exceptions continuously

This approach keeps the record stable as it moves from field systems into accounting and reporting environments.
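As a rough illustration of the three steps, the sketch below keeps a registry of shared data domains with an owner and approver for each, then routes open exceptions to the responsible owner. The domain names, role names, and the `route_exceptions` helper are hypothetical, and the exceptions are hard-coded stand-ins for whatever monitoring an organization already runs.

```python
from collections import defaultdict

# Steps 1 and 2: a hypothetical registry of shared data domains,
# each with a named owner and an approver for changes.
registry = {
    "well_identifier": {"owner": "operations_data", "approver": "operations_manager"},
    "ownership_interest": {"owner": "land_admin", "approver": "land_manager"},
    "accounting_hierarchy": {"owner": "accounting_data", "approver": "controller"},
}

# Step 3: exceptions raised by monitoring (illustrative values only).
open_exceptions = [
    {"domain": "ownership_interest", "record_id": "WELL-001", "issue": "interests total 95%"},
    {"domain": "well_identifier", "record_id": "WELL-002", "issue": "missing in accounting"},
    {"domain": "facility_structure", "record_id": "PAD-C", "issue": "parent facility differs"},
]


def route_exceptions(exceptions, registry):
    """Group exception record IDs by the owner of the affected data domain."""
    queues = defaultdict(list)
    for exc in exceptions:
        entry = registry.get(exc["domain"])
        # A domain with no registry entry has no owner; surface that explicitly.
        owner = entry["owner"] if entry else "unassigned"
        queues[owner].append(exc["record_id"])
    return dict(queues)


queues = route_exceptions(open_exceptions, registry)
for owner, record_ids in queues.items():
    print(owner, record_ids)
```

Anything that lands in the `unassigned` queue points to a data domain that was never given an owner in step 2, which is itself a governance gap worth closing.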

Improving Accuracy Across Workflows

Data governance in upstream operations is most effective when it reflects how data moves across connected workflows. Field capture, accounting, and reporting all depend on the same records, and inconsistencies at any point create downstream effort.

When governance is applied as a control layer across those workflows, data remains consistent as it moves through the process. The result is improved reporting accuracy, reduced reconciliation, and greater confidence in operational and financial outcomes.

Frequently Asked Questions

Why is data governance important in oil and gas?

Upstream operations rely on shared data across field, accounting, land, and regulatory workflows. Without governance, inconsistencies in key records create reconciliation effort, reporting delays, and increased dispute risk.

What is a single source of truth in upstream operations?

A single source of truth is a governed set of master and reference data that remains consistent as it moves across systems and workflows. All teams rely on the same controlled record rather than maintaining separate versions.

What is the difference between data governance and data management?

Data governance defines ownership, controls, and validation for how data is created and maintained. Data management includes the broader handling of data across systems. Governance ensures that shared data remains consistent and traceable as it moves through operational workflows.

Visit the Upstream On Demand Suite for more information.
